Sharding, MongoDB’s method for addressing the demands of data growth, is the process of storing data records across many machines. As the size of the data grows, a single machine may not be able to store all of the data or provide acceptable read and write throughput. Sharding solves this problem through horizontal scaling: you add more machines to support data growth and the demands of read and write operations.

Why Sharding?

- In replication, the primary node receives all writes.

- Latency-sensitive queries still go to the primary.

- A single replica set is limited to 50 members, of which at most 7 can vote.

- When the active data set is large, a single machine may not have enough memory to hold it.

- The local disk of a single machine may not be big enough.

- Vertical scaling is very expensive.

Sharding in MongoDB

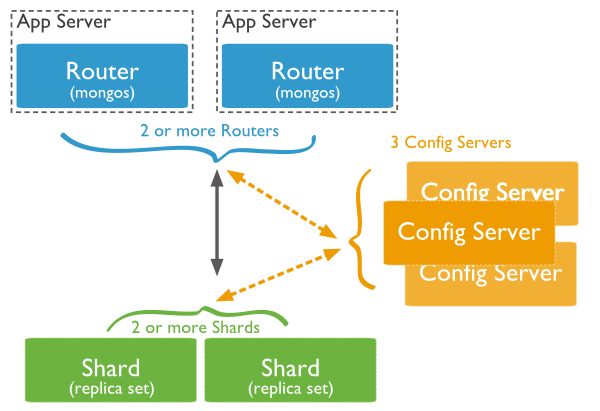

The diagram above shows sharding in MongoDB using a sharded cluster.

The sharded cluster has three main components:

- Shards: Shards store the data and provide high availability and data consistency. In a production environment, each shard is a separate replica set.

- Config Servers: Config servers store the cluster’s metadata, which maps the cluster’s data set to the shards. The query router uses this metadata to direct operations to specific shards. In a production environment, config servers are deployed as a replica set.

- Query Routers: Query routers are mongos instances that interface with client applications and direct operations to the appropriate shard. The query router processes each operation, targets it to the appropriate shards, and then returns the results to the client. A client sends its requests to one query router. A sharded cluster can run more than one query router to divide the client request load, and clusters commonly run many of them.

Shard Keys

MongoDB uses the shard key to distribute the collection’s documents across the shards. The shard key consists of one or more fields in the documents.

Starting in version 4.4, documents in sharded collections can be missing the shard key fields. Missing shard key fields are treated as having null values when distributing the documents across the shards, but not when routing queries. See Missing Shard Key Fields for further details.

Shard key fields are required in each document for a sharded collection in versions 4.2 and before.
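As a minimal sketch of the null-substitution rule, the hypothetical helper below (not part of any MongoDB API) extracts a shard key value from a document and uses `None`, standing in for null, for any missing field. It only handles top-level fields, an assumption made to keep the example short.

```python
def shard_key_value(document, shard_key_fields):
    """Return the shard key value as a tuple, one entry per shard key field.

    A field that is absent from the document yields None, mirroring how
    missing shard key fields are treated as null for data distribution.
    """
    return tuple(document.get(field) for field in shard_key_fields)


complete = {"_id": 1, "region": "EU", "customer_id": 42}
partial = {"_id": 2, "region": "EU"}  # customer_id is missing

print(shard_key_value(complete, ["region", "customer_id"]))  # ('EU', 42)
print(shard_key_value(partial, ["region", "customer_id"]))   # ('EU', None)
```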

If you want to shard a collection, you choose the shard key.

You can reshard a collection starting with MongoDB 5.0 by altering the collection’s shard key.

Starting in MongoDB 4.4, you can refine a shard key by adding one or more suffix fields to the existing shard key.

The selection of shard key cannot be altered after sharding in MongoDB 4.2 and before.
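The version notes above correspond to two admin commands, `refineCollectionShardKey` (4.4 and later) and `reshardCollection` (5.0 and later). The sketch below only builds the command documents. The helper names and the `shop.orders` namespace are hypothetical, and sending the documents requires a live sharded cluster and a driver, which is not shown here.

```python
def refine_shard_key_command(namespace, current_key, new_key):
    """Build a refineCollectionShardKey command document.

    Refining only appends suffix fields, so the new key must start with
    the existing key and be strictly longer.
    """
    current_fields = list(current_key.items())
    new_fields = list(new_key.items())
    if len(new_fields) <= len(current_fields):
        raise ValueError("a refined key must add at least one suffix field")
    if new_fields[:len(current_fields)] != current_fields:
        raise ValueError("a refined key must start with the existing shard key")
    return {"refineCollectionShardKey": namespace, "key": dict(new_key)}


def reshard_command(namespace, new_key):
    """Build a reshardCollection command document for a new shard key."""
    return {"reshardCollection": namespace, "key": dict(new_key)}


print(refine_shard_key_command(
    "shop.orders", {"customer_id": 1}, {"customer_id": 1, "order_id": 1}))
print(reshard_command("shop.orders", {"region": 1, "order_id": 1}))
```

With a driver, a document like this would typically be passed to an admin-command call on a connection to mongos.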

The distribution of a document among the shards is determined by the shard key value.

Starting in MongoDB 4.2, you can update a document’s shard key value unless the shard key field is the immutable _id field. For further information, see Change a Document’s Shard Key Value.

The value of a document’s shard key field is immutable in MongoDB 4.0 and earlier.

Shard Key Index

To shard a collection, the collection must have an index that starts with the shard key. When sharding an empty collection, MongoDB creates the supporting index if the collection does not already have an appropriate index for the chosen shard key. For a collection that already contains data, create the index before sharding. See Shard Key Indexes.
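The prefix requirement can be expressed as a small check. The function below is a hypothetical helper, not a MongoDB API. It takes key patterns as ordered dictionaries of field to direction and is deliberately strict: it requires field names and directions to match exactly, which may be more conservative than the server.

```python
def index_supports_shard_key(index_key, shard_key):
    """Return True if the index key pattern begins with the shard key."""
    index_fields = list(index_key.items())
    shard_fields = list(shard_key.items())
    return bool(shard_fields) and index_fields[:len(shard_fields)] == shard_fields


shard_key = {"region": 1, "customer_id": 1}

# The index starts with the shard key, then adds another field.
print(index_supports_shard_key(
    {"region": 1, "customer_id": 1, "created": 1}, shard_key))  # True

# Same fields, wrong order: the shard key is not a prefix.
print(index_supports_shard_key(
    {"customer_id": 1, "region": 1}, shard_key))  # False
```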

Shard Key Strategy

The choice of shard key affects the performance, efficiency, and scalability of a sharded cluster. A poorly chosen shard key can bottleneck even a cluster with the best possible hardware and infrastructure. The choice of shard key and its backing index can also affect the sharding strategy that your cluster can use.

Chunks

MongoDB partitions sharded data into chunks. Each chunk has an inclusive lower bound and an exclusive upper bound based on the shard key.
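To make the boundary rule concrete, here is a minimal sketch that models chunks as the gaps between sorted split points and finds the chunk for a shard key value. The function names are hypothetical, and negative and positive infinity stand in for the outermost bounds.

```python
import bisect


def find_chunk(split_points, value):
    """Return the index of the chunk that holds `value`.

    Chunk i covers [lower, upper): a value equal to a split point belongs
    to the chunk that starts there, which is what bisect_right yields.
    """
    return bisect.bisect_right(split_points, value)


def chunk_bounds(split_points, index):
    """Return the (inclusive lower, exclusive upper) bounds of a chunk."""
    lower = split_points[index - 1] if index > 0 else float("-inf")
    upper = split_points[index] if index < len(split_points) else float("inf")
    return lower, upper


splits = [100, 200]
for key in (99, 100, 199, 200):
    chunk = find_chunk(splits, key)
    print(key, "-> chunk", chunk, chunk_bounds(splits, chunk))
```

The value 100 lands in the chunk covering 100 up to 200, and 200 lands in the next chunk, because the lower bound is inclusive and the upper bound is exclusive.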

Balancer and Even Chunk Distribution

A balancer runs in the background to move chunks among the shards in an effort to achieve an equitable distribution of chunks across all of the cluster’s shards.
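The idea of evening out chunks can be illustrated with a toy model. This is not MongoDB’s balancing algorithm, which applies its own thresholds and policies. The function below is a hypothetical sketch that repeatedly moves one chunk from the most loaded shard to the least loaded shard until their counts differ by no more than a threshold.

```python
def balance(chunk_counts, threshold=1):
    """Toy balancer: return (final counts, list of (donor, recipient) moves)."""
    if threshold < 1:
        raise ValueError("threshold must be at least 1 so the loop terminates")
    counts = dict(chunk_counts)
    migrations = []
    if not counts:
        return counts, migrations
    while True:
        donor = max(counts, key=counts.get)
        recipient = min(counts, key=counts.get)
        if counts[donor] - counts[recipient] <= threshold:
            break
        # Move a single chunk per round, as one migration.
        counts[donor] -= 1
        counts[recipient] += 1
        migrations.append((donor, recipient))
    return counts, migrations


final_counts, moves = balance({"shardA": 6, "shardB": 0, "shardC": 0})
print(final_counts)  # {'shardA': 2, 'shardB': 2, 'shardC': 2}
print(len(moves))    # 4
```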

Advantages of Sharding

Reads / Writes

The read and write workload in the sharded cluster is distributed among the shards by MongoDB, allowing each shard to handle a portion of the cluster operations. By adding more shards, read and write workloads can be expanded horizontally across the cluster.

For queries that include the shard key or the prefix of a compound shard key, mongos can target the query at a specific shard or set of shards. These targeted operations are generally more efficient than broadcasting to every shard in the cluster.
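The targeting rule can be sketched as a check on which shard key fields a query filter contains. The helpers below are hypothetical and simplified: they look only at field names in the filter and ignore the kind of condition on each field, which a real router also takes into account.

```python
def usable_prefix(query, shard_key_fields):
    """Return the longest leading run of shard key fields present in the query."""
    prefix = []
    for field in shard_key_fields:
        if field not in query:
            break
        prefix.append(field)
    return prefix


def routing_mode(query, shard_key_fields):
    """Classify a query as 'targeted' or 'broadcast' under this simple model."""
    return "targeted" if usable_prefix(query, shard_key_fields) else "broadcast"


shard_key = ["region", "customer_id"]
print(routing_mode({"region": "EU", "customer_id": 42}, shard_key))  # targeted
print(routing_mode({"region": "EU"}, shard_key))                     # targeted
print(routing_mode({"customer_id": 42}, shard_key))                  # broadcast
```

The last query contains a shard key field, but not a prefix of the compound key, so under this model it goes to every shard.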

Starting in MongoDB 4.4, mongos can support hedged reads to minimize latencies.

Storage Capacity

Sharding distributes data across the shards in the cluster, allowing each shard to contain a subset of the total cluster data. As the data set grows, additional shards increase the storage capacity of the cluster.

High Availability

Deploying config servers and shards as replica sets provides increased availability.

Even if one or more shard replica sets become completely unavailable, the sharded cluster can continue to perform partial reads and writes. That is, while data on the unavailable shard(s) cannot be accessed, reads or writes directed at the available shards can still succeed.

Considerations Before Sharding

Sharded cluster infrastructure requirements and complexity require careful planning, execution, and maintenance.

Once a collection has been sharded, MongoDB provides no method to unshard a sharded collection.

While you can reshard your collection later, it is important to carefully consider your shard key choice to avoid scalability and performance issues.

TIP

To understand the operational requirements and restrictions for sharding your collection, see Operational Restrictions in Sharded Clusters.

If queries do not include the shard key or the prefix of a compound shard key, mongos performs a broadcast operation, querying all shards in the sharded cluster. These scatter/gather queries can be long running operations.

Starting in MongoDB 5.1, when starting, restarting, or adding a shard server with sh.addShard(), the Cluster Wide Write Concern (CWWC) must be set.

If the CWWC is not set and the shard is configured such that the default write concern is { w : 1 }, the shard server fails to start or be added and returns an error.
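One way to set the CWWC is the `setDefaultRWConcern` admin command, issued through mongos. The sketch below only builds the command document. The helper name is hypothetical, and the `"majority"` value is an illustrative choice rather than a recommendation taken from this article.

```python
def set_cluster_wide_write_concern_command(w="majority"):
    """Build a setDefaultRWConcern command document that sets the CWWC."""
    return {"setDefaultRWConcern": 1, "defaultWriteConcern": {"w": w}}


print(set_cluster_wide_write_concern_command())
```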

See default write concern calculations for details on how the default write concern is calculated.

NOTE

If you have an active support contract with MongoDB, consider contacting your account representative for assistance with sharded cluster planning and deployment.

Sharded and Non-Sharded Collections

A database can have a mixture of sharded and unsharded collections. Sharded collections are partitioned and distributed across the shards in the cluster. Unsharded collections are stored on a primary shard. Each database has its own primary shard.

Connecting to a Sharded Cluster

You must connect to a mongos router to interact with any collection in the sharded cluster. This includes sharded and unsharded collections. Clients should never connect to a single shard in order to perform read or write operations.

You can connect to a mongos the same way you connect to a mongod, using mongosh or a MongoDB driver.

Sharding Strategy

MongoDB supports two sharding strategies for distributing data across sharded clusters.

Hashed Sharding

Hashed Sharding involves computing a hash of the shard key field’s value. Each chunk is then assigned a range based on the hashed shard key values.

TIP

MongoDB automatically computes the hashes when resolving queries using hashed indexes. Applications do not need to compute hashes.

While a range of shard keys may be “close”, their hashed values are unlikely to be on the same chunk. Data distribution based on hashed values facilitates more even data distribution, especially in data sets where the shard key changes monotonically.

However, hashed distribution means that range-based queries on the shard key are less likely to target a single shard, resulting in more cluster-wide broadcast operations.

See Hashed Sharding for more information.
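The effect on monotonically increasing keys can be seen in a small simulation. This is a sketch under stated assumptions: MD5 truncated to 64 bits is used purely as a stand-in hash, not as a claim about MongoDB’s hash function, and the hash space is cut into equal ranges, one per chunk. The function names are hypothetical.

```python
import bisect
import hashlib
from collections import Counter

HASH_SPACE = 2 ** 64  # size of the stand-in 64-bit hash space


def hashed_chunk(value, num_chunks):
    """Map a key to a chunk by hashing it and cutting the hash space evenly."""
    digest = hashlib.md5(str(value).encode("utf-8")).digest()
    hashed = int.from_bytes(digest[:8], "big")
    return hashed * num_chunks // HASH_SPACE


def ranged_chunk(value, split_points):
    """Map a key to a chunk by its raw value (inclusive lower bounds)."""
    return bisect.bisect_right(split_points, value)


# Newly inserted, ever-increasing ids, all above the last split point.
new_ids = range(1000, 2000)
hashed_counts = Counter(hashed_chunk(i, 4) for i in new_ids)
ranged_counts = Counter(ranged_chunk(i, [250, 500, 750]) for i in new_ids)

print(sorted(hashed_counts.items()))  # spread over several chunks
print(sorted(ranged_counts.items()))  # [(3, 1000)]
```

With ranges on the raw value, every new id falls into the last chunk. With hashing, the same ids are scattered across the chunks.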

Ranged Sharding

Ranged sharding involves dividing data into ranges based on the shard key values. Each chunk is then assigned a range based on the shard key values.

A range of shard keys whose values are “close” are more likely to reside on the same chunk. This allows for targeted operations as a mongos can route the operations to only the shards that contain the required data.

The efficiency of ranged sharding depends on the shard key chosen. Poorly considered shard keys can result in uneven distribution of data, which can negate some benefits of sharding or can cause performance bottlenecks. See shard key selection for range-based sharding.

See Ranged Sharding for more information.
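A range query under ranged sharding only needs the shards whose chunks overlap the queried interval. The sketch below assumes chunks are defined by sorted split points and that `chunk_owners[i]` names the shard holding chunk `i`. All names and values are hypothetical.

```python
import bisect


def chunks_for_range(split_points, low, high):
    """Return indexes of chunks that overlap the half-open range [low, high)."""
    if low >= high:
        raise ValueError("low must be smaller than high")
    first = bisect.bisect_right(split_points, low)  # chunk containing `low`
    last = bisect.bisect_left(split_points, high)   # chunk just below `high`
    return list(range(first, last + 1))


def shards_for_range(split_points, chunk_owners, low, high):
    """Return the sorted set of shards a range query must contact."""
    return sorted({chunk_owners[i] for i in chunks_for_range(split_points, low, high)})


splits = [100, 200, 300]
owners = ["shardA", "shardA", "shardB", "shardC"]

print(shards_for_range(splits, owners, 120, 180))  # ['shardA']
print(shards_for_range(splits, owners, 150, 250))  # ['shardA', 'shardB']
```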

Zones in Sharded Clusters

Zones can help improve the locality of data for sharded clusters that span multiple data centers.

In sharded clusters, you can create zones of sharded data based on the shard key. You can associate each zone with one or more shards in the cluster. A shard can associate with any number of zones. In a balanced cluster, MongoDB migrates chunks covered by a zone only to those shards associated with the zone.

Each zone covers one or more ranges of shard key values. Each range a zone covers is always inclusive of its lower boundary and exclusive of its upper boundary.

You must use fields contained in the shard key when defining a new range for a zone to cover. If using a compound shard key, the range must include the prefix of the shard key. See shard keys in zones for more information.
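The zone rules can be sketched as a lookup from a shard key value to the shards allowed to hold it. The data structures and names below are hypothetical, the key is a single numeric field rather than a compound key, and values outside every zone are assumed to be eligible for any shard.

```python
def zone_for_value(zone_ranges, value):
    """Return the zone whose [low, high) range covers `value`, or None."""
    for low, high, zone in zone_ranges:
        if low <= value < high:  # inclusive lower, exclusive upper boundary
            return zone
    return None


def eligible_shards(zone_ranges, zone_shards, all_shards, value):
    """Return the shards that may hold a chunk containing `value`."""
    zone = zone_for_value(zone_ranges, value)
    if zone is None:
        return sorted(all_shards)
    return sorted(zone_shards[zone])


zone_ranges = [(0, 1000, "EU"), (1000, 2000, "US")]
# A shard can belong to several zones: shard1 serves both EU and US.
zone_shards = {"EU": {"shard0", "shard1"}, "US": {"shard1", "shard2"}}
all_shards = {"shard0", "shard1", "shard2"}

print(eligible_shards(zone_ranges, zone_shards, all_shards, 999))   # ['shard0', 'shard1']
print(eligible_shards(zone_ranges, zone_shards, all_shards, 1000))  # ['shard1', 'shard2']
print(eligible_shards(zone_ranges, zone_shards, all_shards, 5000))  # ['shard0', 'shard1', 'shard2']
```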

The possible use of zones in the future should be taken into consideration when choosing a shard key.

TIP

Starting in MongoDB 4.0.3, setting up zones and zone ranges before you shard an empty or a non-existing collection allows for a faster setup of zoned sharding.

Collations in Sharding

Use the shardCollection command with the collation : { locale : "simple" } option to shard a collection which has a default collation. Successful sharding requires that:

- The collection must have an index whose prefix is the shard key

- The index must have the collation { locale: "simple" }

When creating new collections with a collation, ensure these conditions are met prior to sharding the collection.

NOTE

Queries on the sharded collection continue to use the default collation configured for the collection. To use the shard key index’s simple collation, specify {locale : "simple"} in the query’s collation document.

See shardCollection for more information about sharding and collation.
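As a rough sketch of the requirements above, the helpers below build a `shardCollection` command document with the simple collation and check the two index conditions. The index description format (a dictionary with `key` and `locale` entries) is a hypothetical simplification for this example, not the format a server returns, and the `shop.customers` namespace is made up.

```python
SIMPLE_COLLATION = {"locale": "simple"}


def shard_collection_command(namespace, shard_key):
    """Build a shardCollection command document using the simple collation."""
    return {
        "shardCollection": namespace,
        "key": dict(shard_key),
        "collation": dict(SIMPLE_COLLATION),
    }


def index_meets_requirements(index, shard_key):
    """Check both conditions: shard key prefix and simple collation."""
    index_fields = list(index["key"].items())
    shard_fields = list(shard_key.items())
    has_prefix = bool(shard_fields) and index_fields[:len(shard_fields)] == shard_fields
    return has_prefix and index.get("locale") == "simple"


key = {"last_name": 1}
print(shard_collection_command("shop.customers", key))
print(index_meets_requirements({"key": {"last_name": 1}, "locale": "simple"}, key))  # True
print(index_meets_requirements({"key": {"last_name": 1}, "locale": "fr"}, key))      # False
```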

Change Streams

Starting in MongoDB 3.6, change streams are available for replica sets and sharded clusters. Change streams allow applications to access real-time data changes without the complexity and risk of tailing the oplog. Applications can use change streams to subscribe to all data changes on a collection or collections.
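A subscription loop might look like the sketch below. It assumes a PyMongo-style collection object whose `watch()` method returns a change stream usable as a context manager and iterator. The function itself is hypothetical and imports no driver, so it accepts any object with that interface. Against a real cluster, the collection would be obtained through a connection to mongos.

```python
def collect_changes(collection, limit):
    """Read up to `limit` change events and return (operation, key) pairs."""
    events = []
    with collection.watch() as stream:
        for change in stream:
            # operationType is e.g. "insert", "update", or "delete".
            events.append((change["operationType"], change.get("documentKey")))
            if len(events) >= limit:
                break
    return events
```

Iterating a real change stream blocks while it waits for new events, so the `limit` argument gives the loop a defined stopping point.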

Transactions

Starting in MongoDB 4.2, with the introduction of distributed transactions, multi-document transactions are available on sharded clusters.

Until a transaction commits, the data changes made in the transaction are not visible outside the transaction.

However, when a transaction writes to multiple shards, not all outside read operations need to wait for the result of the committed transaction to be visible across the shards. For example, if a transaction is committed and write 1 is visible on shard A but write 2 is not yet visible on shard B, an outside read at read concern "local" can read the results of write 1 without seeing write 2.
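The shard A and shard B example can be replayed with a toy model. This is an illustration only, not how MongoDB implements transactions: each hypothetical `ToyShard` keeps transaction writes in a pending area until the commit is applied on that shard, and a read at "local" sees only what is currently visible on the shard it reads from.

```python
class ToyShard:
    """Toy shard with separately tracked visible and pending writes."""

    def __init__(self, name):
        self.name = name
        self.visible = {}  # data that outside readers can see
        self.pending = {}  # transaction writes not yet visible

    def stage(self, key, value):
        self.pending[key] = value

    def apply_commit(self):
        self.visible.update(self.pending)
        self.pending.clear()

    def read_local(self, key):
        return self.visible.get(key)


shard_a, shard_b = ToyShard("A"), ToyShard("B")
shard_a.stage("write1", "committed")
shard_b.stage("write2", "committed")

before = (shard_a.read_local("write1"), shard_b.read_local("write2"))
shard_a.apply_commit()  # the commit becomes visible on shard A first
during = (shard_a.read_local("write1"), shard_b.read_local("write2"))
shard_b.apply_commit()  # then on shard B
after = (shard_a.read_local("write1"), shard_b.read_local("write2"))

print(before)  # (None, None)
print(during)  # ('committed', None)
print(after)   # ('committed', 'committed')
```

The middle reading corresponds to the situation described above: write 1 is visible while write 2 is not yet visible.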